Hypothesis

Every new project begins with an idea and develops through information gathering and testing. The idea behind our analysis is relatively straightforward: suppose it is possible to convert a satellite image into a 3D model of the mapped area. If this proves feasible, it could transform urban planning, disaster response, and environmental management by enabling cost-effective, detailed 3D mapping of varied terrain and landscapes.

This task is complex and consists of many steps, the first of which is obtaining the heights of buildings. We therefore test a narrower hypothesis first:

“It is possible to predict the height of buildings relative to ground level from satellite imagery.”

Let’s test our idea

Dataset Creation

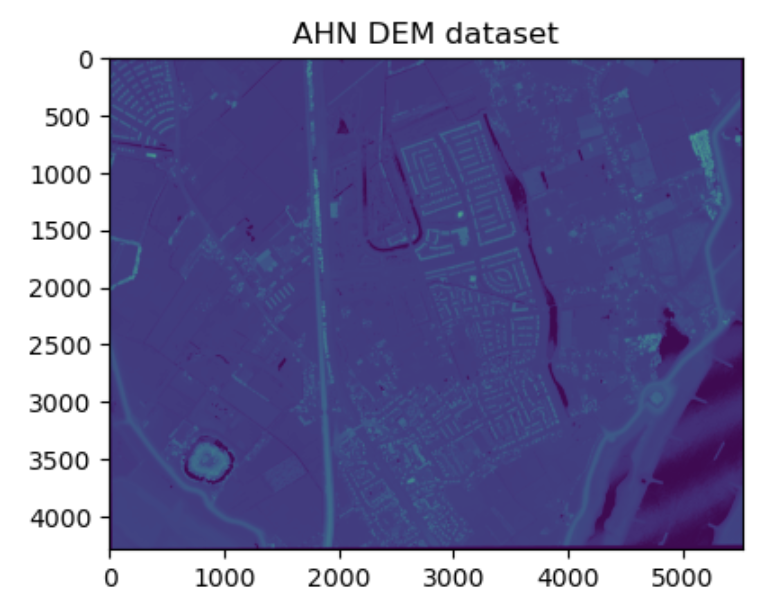

Any ML project begins with data. Since collecting data for every kind of area at once is impractical, we narrow the task further and restrict it to rural areas with predominantly one- to two-storey buildings. To train the model we need annotated building height data, which the AHN DEM dataset provides.

The AHN DEM is a Digital Elevation Model (DEM) of the Netherlands with a resolution of 0.5 m. It is freely available through Google Earth Engine and records the heights of buildings and other elevated objects, which is sufficient for prototyping a building height prediction system without additional steps such as categorizing the various objects on the map.

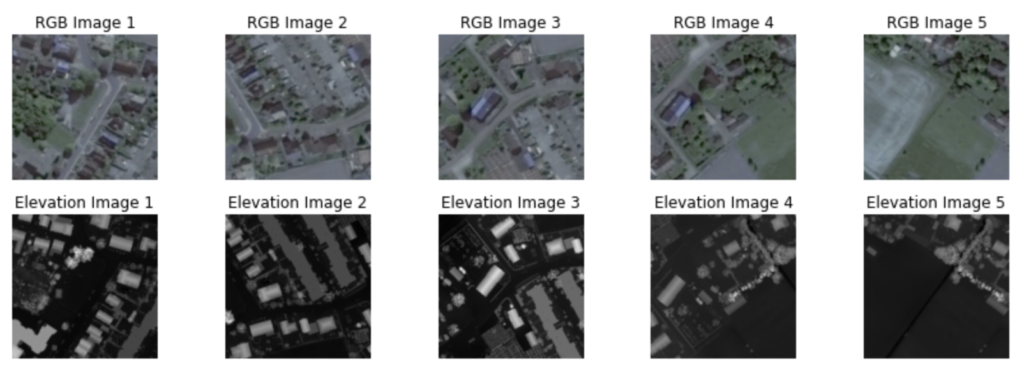

Next, we need satellite images that cover the same coordinates as the selected parts of the AHN DEM. As initial data, we selected five satellite images, each covering an area of approximately 1-1.5 kilometers in radius. Because the dataset is small, we aligned the images with the elevation data manually, which was simpler and faster than automating the process.

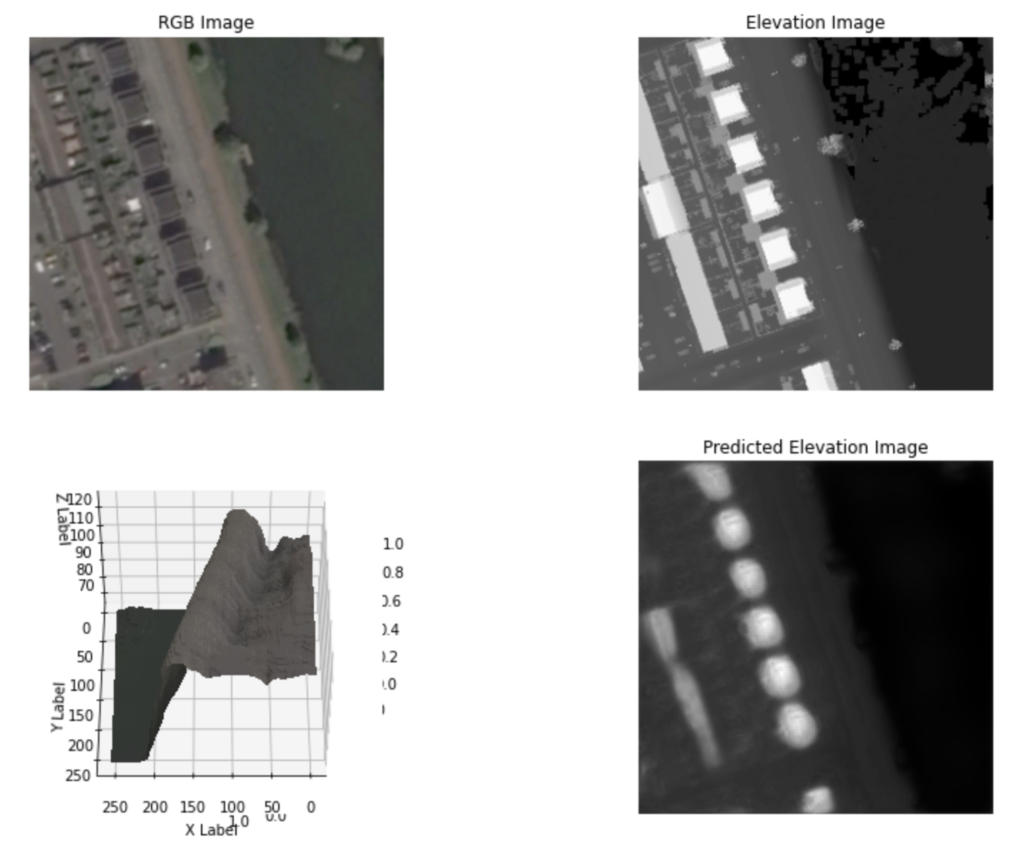

Here is how our dataset looks:

Satellite image on the left and visualization of corresponding elevation data on the right.

Dataset Preprocessing

To keep the model efficient on large images, we split the high-resolution images into smaller tiles, with a 50% overlap between neighboring tiles.
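The splitting step described above could be sketched roughly as follows. This is a minimal illustration, not the exact preprocessing we ran: the function name `tile_with_overlap`, the 256-pixel tile size, and the 1024x1024 input are all assumptions chosen for the example.

```python
import numpy as np

def tile_with_overlap(image, tile_size, overlap=0.5):
    """Split an (H, W) or (H, W, C) array into square tiles.

    Neighboring tiles share `overlap` of their width/height.
    Pixels at the right/bottom edge that do not fill a whole
    tile are dropped in this simplified version.
    """
    stride = max(1, int(tile_size * (1 - overlap)))
    height, width = image.shape[:2]
    tiles = []
    for top in range(0, height - tile_size + 1, stride):
        for left in range(0, width - tile_size + 1, stride):
            tiles.append(image[top:top + tile_size, left:left + tile_size])
    return tiles

# Hypothetical input: a 1024x1024 RGB image (sizes are illustrative only).
image = np.zeros((1024, 1024, 3), dtype=np.uint8)
tiles = tile_with_overlap(image, tile_size=256, overlap=0.5)
print(len(tiles), tiles[0].shape)
```

The same function would need to be applied to the elevation raster with identical parameters so that every image tile keeps its matching height tile.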

We also flipped the images to enlarge the training dataset and improve the model’s ability to handle different spatial orientations.
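A flip augmentation of this kind might look like the sketch below. The helper name `flip_pair` and the choice of one horizontal and one vertical flip are assumptions for illustration. The important detail is that the satellite tile and its height map must be flipped together, otherwise the labels no longer line up with the pixels.

```python
import numpy as np

def flip_pair(image, heights):
    """Return the original (image, heights) pair plus flipped copies.

    The same flip is applied to both arrays so that each height
    value still corresponds to the same image pixel.
    """
    return [
        (image, heights),
        (np.fliplr(image), np.fliplr(heights)),  # mirror left-right
        (np.flipud(image), np.flipud(heights)),  # mirror top-bottom
    ]

# Tiny made-up example: a 2x2 RGB tile and its 2x2 height map in meters.
image = np.arange(12).reshape(2, 2, 3)
heights = np.array([[1.0, 2.0], [3.0, 4.0]])
augmented = flip_pair(image, heights)
print(len(augmented))
```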

Model Development

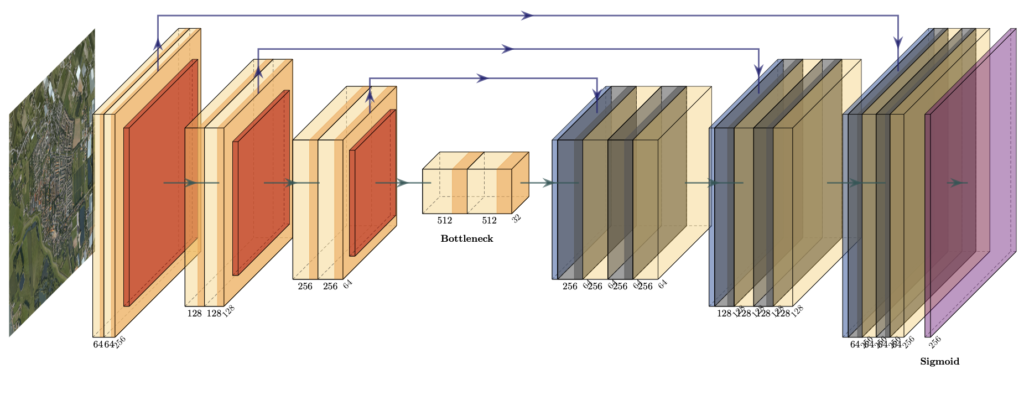

This task combines image segmentation, which identifies buildings, plants, and other objects, with a regression component that estimates height values. The U-Net architecture suits this purpose well because it captures intricate spatial relationships and handles noise and occlusion robustly. Since the prototype prioritizes quick results, a relatively shallow network is preferred. Using Mean Squared Error (MSE) as the training loss helps reduce outliers in the predictions, because it penalizes large errors more heavily than small ones. Mean Absolute Error (MAE) serves as an easily interpreted business metric: it reports the average height prediction error in meters.
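To see why MSE is the stricter choice for training while MAE is easier to read, consider the small worked example below. The height values are made up for illustration. Both sets of predictions have the same MAE, but the one containing a single large error has a much higher MSE.

```python
def mse(pred, target):
    """Mean Squared Error: squares each error, so large misses dominate."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def mae(pred, target):
    """Mean Absolute Error: the average miss, in the same unit as the data."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)

# Made-up ground-truth heights in meters for four pixels.
target = [3.0, 3.0, 6.0, 6.0]
even_errors = [4.0, 4.0, 7.0, 7.0]   # every pixel is off by 1 m
one_outlier = [3.0, 3.0, 6.0, 10.0]  # one pixel is off by 4 m

print(mae(even_errors, target), mse(even_errors, target))  # 1.0 1.0
print(mae(one_outlier, target), mse(one_outlier, target))  # 1.0 4.0
```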

Results

The resulting MAE was approximately 1.5 meters. This level of accuracy is sufficient for real-world applications such as generating 3D models from map images. As we can see, the predicted building heights are smooth and rounded. Overall, the prototype shows promising potential, with building height predictions accurate enough for this purpose.

Combining the predicted building heights with the map image yields a 3D model:

The integration of a 3D model, generated from map data and our height predictions, provides a visual representation of our neural network’s capabilities. By comparing the model’s estimates to the actual landscape, readers can easily understand how well the network can discern spatial relationships in the dataset. This immersive display demonstrates the accuracy of our height predictions and the potential of our approach for real-world applications.
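One possible way to turn a predicted height map into 3D geometry is sketched below. It is an assumption-laden illustration rather than the exact method behind our visualization: the function name `heightmap_to_mesh` is hypothetical, and the 0.5 m pixel size is taken from the AHN DEM resolution. Each pixel becomes a vertex, and every 2x2 block of neighboring pixels becomes two triangles.

```python
import numpy as np

def heightmap_to_mesh(heights, pixel_size=0.5):
    """Convert an (H, W) height map into mesh vertices and triangle faces.

    vertices: (H*W, 3) array of x, y, z coordinates in meters.
    faces:    (2*(H-1)*(W-1), 3) array of vertex indices.
    """
    rows, cols = heights.shape
    ys, xs = np.mgrid[0:rows, 0:cols]
    vertices = np.column_stack([
        xs.ravel() * pixel_size,
        ys.ravel() * pixel_size,
        heights.ravel(),
    ])
    # Vertex index of every pixel, used to address the corners of each cell.
    idx = np.arange(rows * cols).reshape(rows, cols)
    top_left = idx[:-1, :-1].ravel()
    top_right = idx[:-1, 1:].ravel()
    bottom_left = idx[1:, :-1].ravel()
    bottom_right = idx[1:, 1:].ravel()
    # Two triangles per grid cell.
    faces = np.concatenate([
        np.column_stack([top_left, bottom_left, top_right]),
        np.column_stack([top_right, bottom_left, bottom_right]),
    ])
    return vertices, faces

# Made-up 3x4 height map with a single 5 m "building" pixel.
heights = np.zeros((3, 4))
heights[1, 2] = 5.0
vertices, faces = heightmap_to_mesh(heights)
print(vertices.shape, faces.shape)
```

The satellite image colors could then be attached to the vertices as a texture, so the resulting mesh shows the map draped over the predicted heights.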