Introduction

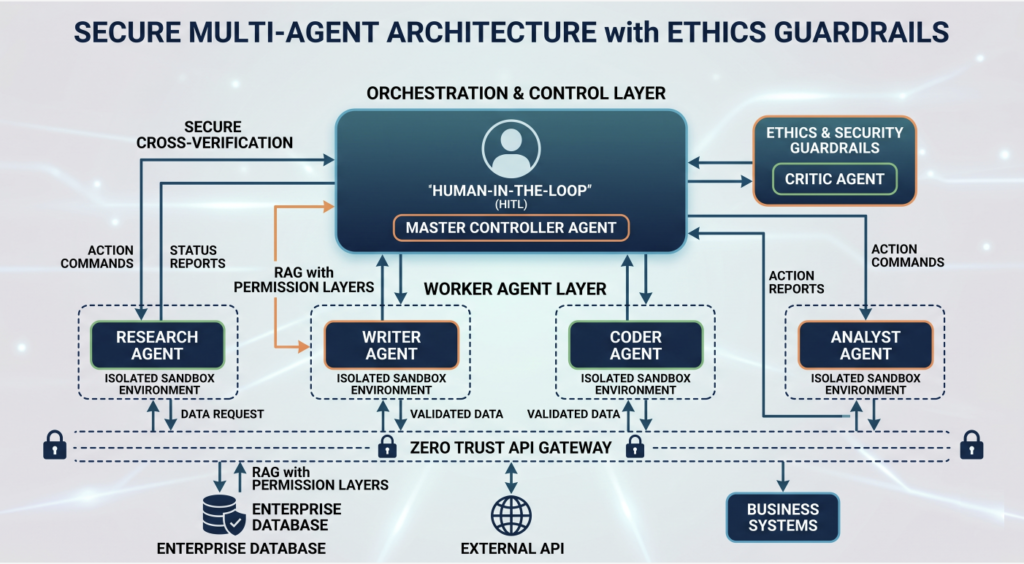

As the AI landscape shifts from single-purpose chatbots to complex Multi-Agent Systems (MAS), the scope for automation has grown dramatically. At MindCraft, we are increasingly implementing architectures in which specialized AI agents (researchers, writers, coders, and analysts) collaborate to solve end-to-end business problems. However, this “teamwork” introduces a new layer of complexity: how do we ensure these agents remain ethical, secure, and aligned with human intent?

The Challenge

The “Echo Chamber” and Agent Drift

In a multi-agent environment, agents communicate with one another. A primary risk is the Feedback Loop Error, where a “hallucination” from one agent is accepted as fact by another, so the error compounds as it passes through the system. A related risk is agent drift: without strict ethical guardrails, autonomous agents might prioritize efficiency over compliance, potentially bypassing security protocols to achieve a goal.
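To see why unchecked hand-offs compound errors, consider a deliberately simplified model (an illustrative assumption, not a measured result): each of n agents in a chain independently introduces an error with probability p, and no agent verifies what it receives. The final output is error-free only if every step is:

$$
P(\text{final output error-free}) = (1 - p)^{n}
$$

With p = 0.05 and n = 5, this gives 0.95^5 ≈ 0.774, so there is roughly a 23% chance that at least one error reaches the final output, even though each agent on its own is wrong only 5% of the time.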

Key Pillars of Secure Multi-Agent Design

- Orchestration with “Human-in-the-Loop” (HITL): We believe that total autonomy is a risk. Our MAS frameworks include mandatory checkpoints where a human supervisor, or a “Controller Agent” with higher-level ethical constraints, reviews the output before it triggers a real-world action (such as sending an email or executing code).

- Isolated Execution Environments (Sandboxing): Security is paramount when agents handle sensitive data. Each agent should operate within a “sandbox”, a restricted environment where it can perform its tasks without access to the entire corporate infrastructure. This limits the damage a single compromised agent can do to the rest of the system.

- Cross-Verification Protocols: To combat hallucinations, we implement a “Critic-Actor” pattern. While one agent (the Actor) performs a task, a second, independent agent (the Critic) audits the result against a set of predefined ethical guidelines and factual databases.
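The Critic-Actor loop and the human checkpoint described above can be combined into one control flow. The Python below is a minimal sketch under stated assumptions: `Verdict`, `run_with_checkpoints`, `toy_actor`, and `toy_critic` are hypothetical names, each agent is modeled as a plain function rather than a model call, and a keyword blocklist stands in for the predefined ethical guidelines.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Verdict:
    approved: bool
    reasons: List[str] = field(default_factory=list)


# Hypothetical signatures: in practice each would wrap a model call.
Actor = Callable[[str, List[str]], str]   # (task, critic feedback) -> draft
Critic = Callable[[str, str], Verdict]    # (task, draft) -> verdict
Approver = Callable[[str], bool]          # human sign-off on the final draft


def run_with_checkpoints(task: str, actor: Actor, critic: Critic,
                         human_approves: Approver,
                         max_attempts: int = 3) -> Optional[str]:
    """Return an approved draft, or None if it must be blocked or escalated."""
    feedback: List[str] = []
    for _ in range(max_attempts):
        draft = actor(task, feedback)
        verdict = critic(task, draft)
        if not verdict.approved:
            # The Critic's objections go back to the Actor; the rejected draft
            # never reaches a downstream agent or a real-world action.
            feedback = verdict.reasons
            continue
        # Mandatory human-in-the-loop checkpoint before any side effect.
        return draft if human_approves(draft) else None
    return None  # The Critic never approved: escalate instead of acting.


# --- Toy stand-ins, for illustration only ---
BANNED = ["guaranteed returns"]  # stand-in for predefined ethical guidelines


def toy_actor(task: str, feedback: List[str]) -> str:
    # Produces a non-compliant draft first, then revises once given feedback.
    if feedback:
        return "Our fund has historically performed well."
    return "Our fund offers guaranteed returns."


def toy_critic(task: str, draft: str) -> Verdict:
    hits = [p for p in BANNED if p in draft.lower()]
    return Verdict(approved=not hits, reasons=[f"banned phrase: {p}" for p in hits])


result = run_with_checkpoints("Draft a client email", toy_actor, toy_critic,
                              human_approves=lambda d: True)
print(result)  # Our fund has historically performed well.
```

The design choice worth noting is the ordering: a draft must pass the Critic before a human sees it, and nothing is returned for execution without the human's sign-off, so a rejected or unapproved draft never triggers a real-world action.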

Why Ethics is a Competitive Advantage

For enterprises, AI security isn’t just about preventing hacks; it’s about trust. Clients and stakeholders need to know that the automated systems representing their brand operate within legal and ethical boundaries.

At MindCraft, we integrate RAG (Retrieval-Augmented Generation) with strict permission layers. This ensures that each agent can access only the data it is authorized to see, maintaining a “Zero Trust” policy within the AI ecosystem.
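One way to read “RAG with strict permission layers” is that access control is enforced at retrieval time, before ranking, so unauthorized text never enters an agent's prompt. The sketch below assumes each document carries a hypothetical `allowed_roles` set and each agent a set of roles; the word-overlap `score` function is a toy stand-in for embedding similarity, and none of these names come from a real library.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: FrozenSet[str]  # hypothetical ACL attached at indexing time


@dataclass(frozen=True)
class Agent:
    name: str
    roles: FrozenSet[str]


def score(query: str, doc: Document) -> int:
    # Toy relevance: number of shared lowercase words (real systems use embeddings).
    return len(set(query.lower().split()) & set(doc.text.lower().split()))


def retrieve(agent: Agent, query: str, corpus: List[Document], k: int = 3) -> List[Document]:
    # Zero Trust: drop documents the agent may not see BEFORE ranking,
    # so unauthorized text can never reach the agent's context.
    visible = [d for d in corpus if d.allowed_roles & agent.roles]
    ranked = sorted(visible, key=lambda d: score(query, d), reverse=True)
    return [d for d in ranked[:k] if score(query, d) > 0]


corpus = [
    Document("hr-001", "salary bands for engineering roles", frozenset({"hr"})),
    Document("kb-042", "engineering onboarding checklist", frozenset({"hr", "engineering"})),
]
writer = Agent("writer", frozenset({"engineering"}))
print([d.doc_id for d in retrieve(writer, "engineering salary bands", corpus)])  # ['kb-042']
```

Filtering before ranking, rather than redacting results afterwards, means that even a query crafted to target restricted content cannot surface a document the agent was never entitled to.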

The Path Forward

The future of AI is collaborative, but it must be controlled. By building Multi-Agent Systems with “Security by Design,” we enable businesses to scale their operations without compromising on integrity.
