In modern AI engineering, the primary challenge isn’t just model capability—it’s context management. As conversation depth and data volume increase, monolithic models often suffer from “context dilution,” leading to hallucinations and logic errors.

MindCraft addresses this with a hierarchical decomposition strategy. Two “Super-Agents,” the AI Talent Matcher and the AI Technical Interviewer, each orchestrate their own internal ecosystem of specialized sub-agents.

Platform I

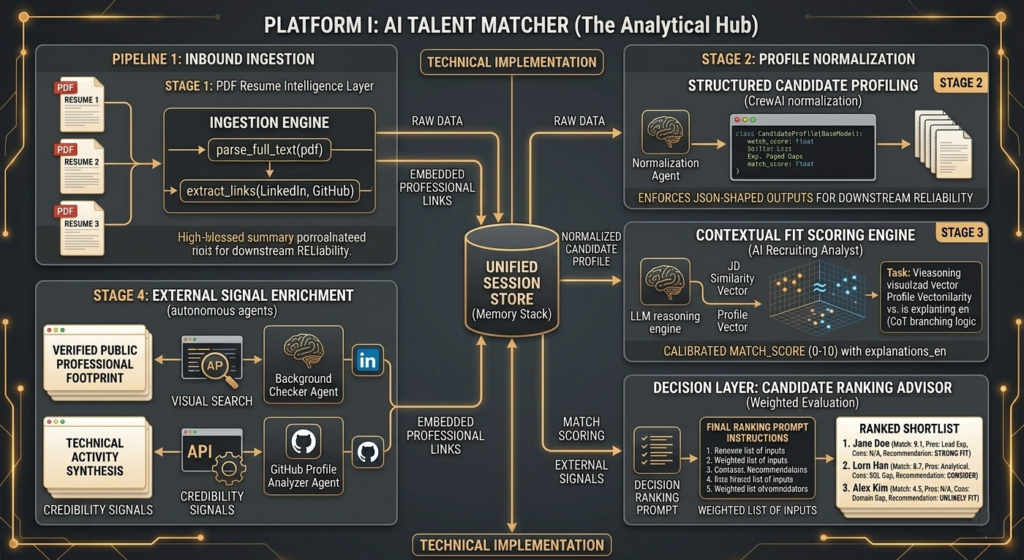

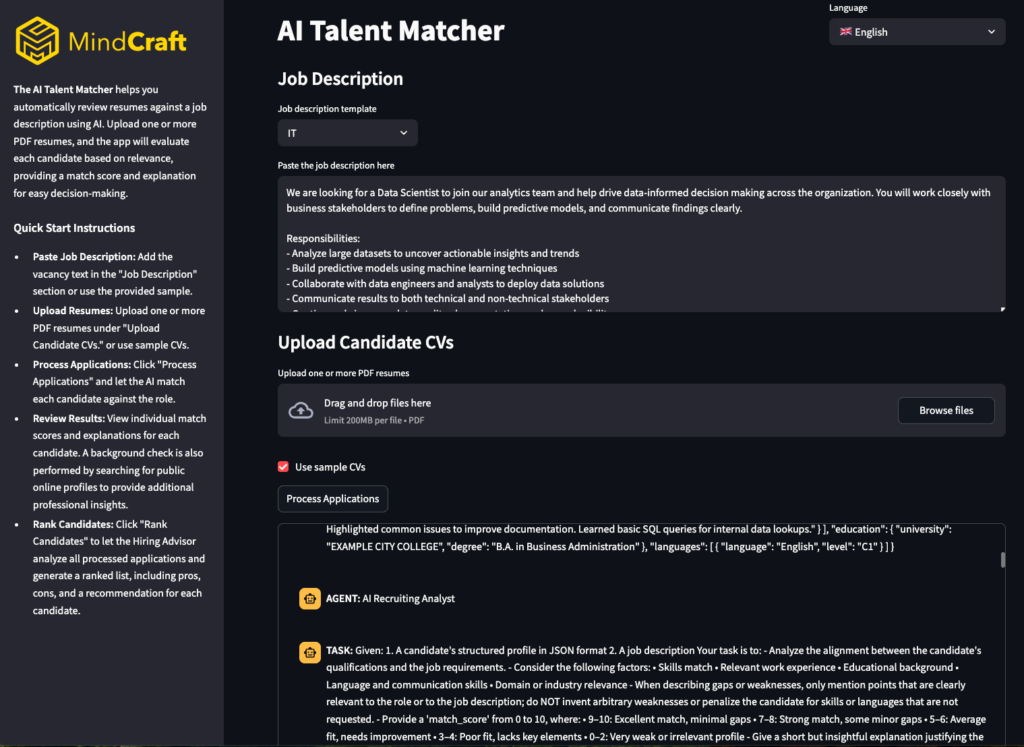

AI Talent Matcher (The Analytical Hub)

The AI Talent Matcher serves as the analytical core of the system, transforming raw resume PDFs into structured candidate intelligence and decision-ready hiring outputs.

Technical Implementation

- PDF Resume Intelligence Layer: The platform ingests uploaded resumes, extracts their full text, and captures embedded professional links (e.g., LinkedIn/GitHub).

- Structured Candidate Profiling: A CrewAI agent chain converts parsed resume content into normalized candidate fields (name, role, skills context, experience indicators) and enforces JSON-shaped outputs for downstream reliability. This makes candidate data consistent, session-storable, and reusable across matching and ranking stages.

- Contextual Fit Scoring Engine: The AI Recruiting Analyst evaluates each candidate against the job description and returns a calibrated 0-10 match_score with justifications. Scoring is semantic and instruction-driven via LLM reasoning over profile/job context, rather than simple keyword overlap.

- External Signal Enrichment: Two specialized agents extend resume-only analysis: a Background Checker for verified public professional footprint, and a GitHub Profile Analyzer for technical activity synthesis. This enrichment provides credibility signals and hiring context beyond the CV.

- Decision Layer (Candidate Ranking Advisor): After screening, a dedicated advisor crew ranks candidates primarily by resume-job fit, then adds pros/cons and a recommendation label (Strong fit, Consider, Unlikely fit) to support fast, explainable shortlist decisions.
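The decision layer described in the last bullet can be approximated in plain Python. This is only a sketch: the `ScreenedCandidate` type, the function names, and especially the score cut-offs for each label are assumptions made for illustration, since the article does not state the thresholds the advisor crew actually uses.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class ScreenedCandidate:
    name: str
    match_score: float  # 0-10 score produced by the Recruiting Analyst
    pros: List[str]
    cons: List[str]


# Hypothetical cut-offs: the real thresholds are not given in the article.
STRONG_FIT_MIN = 8.0
CONSIDER_MIN = 5.0


def recommendation_label(score: float) -> str:
    """Map a 0-10 match score to one of the three labels."""
    if score >= STRONG_FIT_MIN:
        return "Strong fit"
    if score >= CONSIDER_MIN:
        return "Consider"
    return "Unlikely fit"


def rank_candidates(candidates: List[ScreenedCandidate]) -> List[Dict]:
    """Order candidates by resume-job fit and attach a label to each."""
    ordered = sorted(candidates, key=lambda c: c.match_score, reverse=True)
    return [
        {
            "rank": position,
            "name": c.name,
            "match_score": c.match_score,
            "recommendation": recommendation_label(c.match_score),
            "pros": c.pros,
            "cons": c.cons,
        }
        for position, c in enumerate(ordered, start=1)
    ]
```

Keeping the label a pure function of the score makes the shortlist easy to explain: a recruiter can see exactly why a candidate landed in a given bucket.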

Implementation Example I

Agentic Orchestration Flow

To illustrate how Platform I handles the transition from raw data to a decision-ready profile, we use a structured agentic workflow. Below is a simplified implementation showing the handover between the Profiler and the Analyst:
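A minimal, framework-free sketch of that handover follows. It does not use the CrewAI API. The two agents are represented as plain callables that return JSON strings, and the field names (`skills`, `years_experience`), the `run_handover` helper, and the stub agents are all hypothetical stand-ins rather than the platform's actual code.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable, List


@dataclass
class CandidateProfile:
    name: str
    role: str
    skills: List[str]
    years_experience: float


REQUIRED_FIELDS = ("name", "role", "skills", "years_experience")


def parse_profile(raw_json: str) -> CandidateProfile:
    """Validate the Profiler's JSON output before it is handed on."""
    data = json.loads(raw_json)
    missing = [f for f in REQUIRED_FIELDS if f not in data]
    if missing:
        raise ValueError(f"profile is missing fields: {missing}")
    return CandidateProfile(
        name=str(data["name"]),
        role=str(data["role"]),
        skills=[str(s) for s in data["skills"]],
        years_experience=float(data["years_experience"]),
    )


def run_handover(
    resume_text: str,
    job_description: str,
    profiler: Callable[[str], str],
    analyst: Callable[[str, str], str],
) -> dict:
    """Profiler -> validated profile -> Analyst -> clamped 0-10 score."""
    profile = parse_profile(profiler(resume_text))
    verdict = json.loads(analyst(json.dumps(asdict(profile)), job_description))
    score = max(0.0, min(10.0, float(verdict["match_score"])))
    return {
        "profile": asdict(profile),
        "match_score": score,
        "justification": verdict["justification"],
    }


# Stub agents standing in for the LLM-backed Profiler and Analyst.
def stub_profiler(resume_text: str) -> str:
    return json.dumps({
        "name": "Jane Doe",
        "role": "Backend Engineer",
        "skills": ["Python", "PostgreSQL"],
        "years_experience": 6,
    })


def stub_analyst(profile_json: str, job_description: str) -> str:
    return json.dumps({
        "match_score": 7.5,
        "justification": "Illustrative justification text.",
    })


result = run_handover(
    "raw resume text", "Senior Python developer", stub_profiler, stub_analyst
)
```

Validating the profile at the boundary means a malformed Profiler response fails loudly before it reaches scoring, which is the downstream reliability the JSON-shaped outputs are meant to provide.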

Platform II

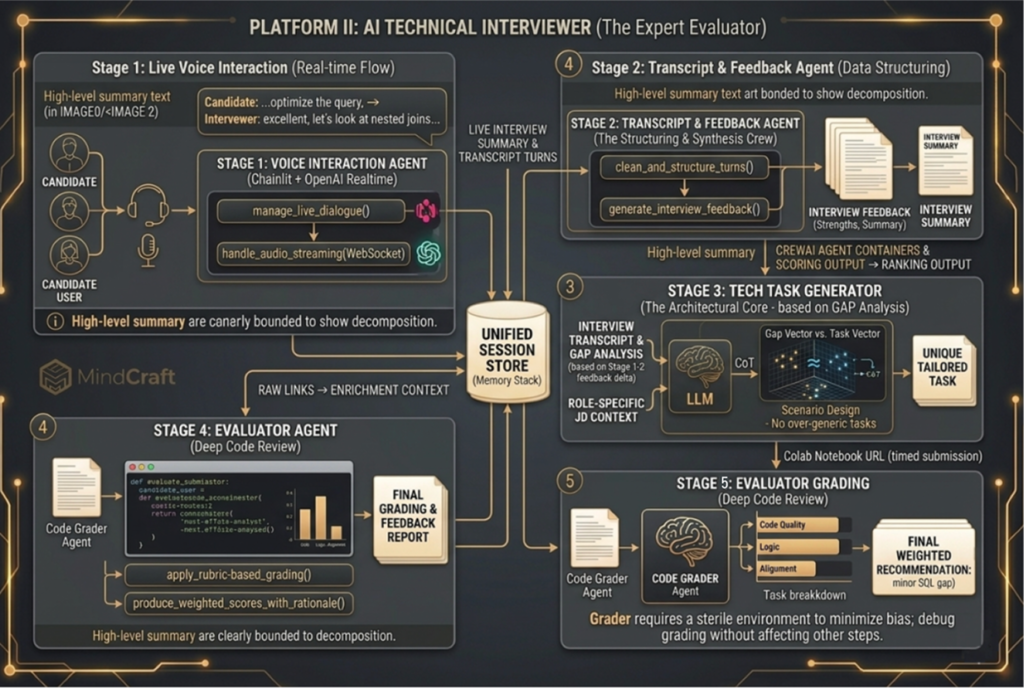

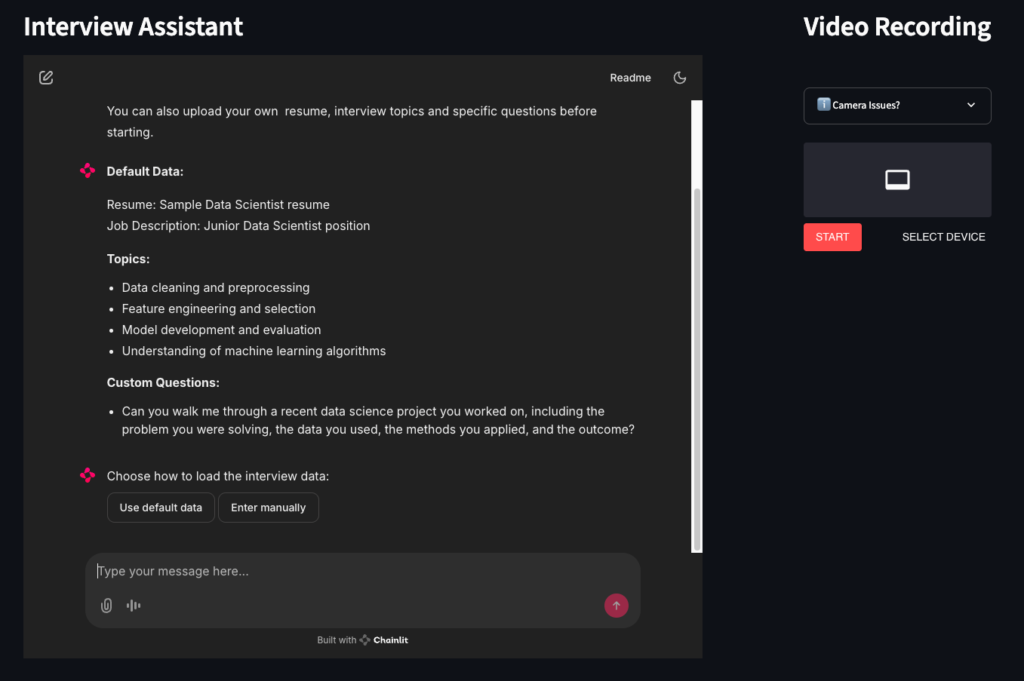

AI Technical Interviewer (The Expert Evaluator)

The Interviewer is a live, voice-enabled environment that runs the full technical verification cycle: interview, task assignment, and final evaluation.

Internal Ecosystem & Task Lifecycle

- Voice Interaction Agent: Runs the real-time interview through Chainlit + OpenAI Realtime, handling live dialogue, audio streaming, and interview flow control.

- Transcript & Feedback Agent: Structures and cleans transcript turns, then generates interview feedback (strengths, improvement areas, summary).

- Tech Task Generator: Requests a role-specific coding task from the task service, creates a Colab notebook, grants timed access, and schedules auto-evaluation.

- Evaluator Agent: Reviews the submitted notebook, applies a rubric-based LLM grading process, and produces weighted scores with a rationale.
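The weighted scoring step of the Evaluator Agent reduces to a weighted average over rubric criteria. The sketch below assumes the LLM grader has already returned a 0-10 score per criterion. The criterion names and weights are hypothetical, as the article does not publish the actual rubric.

```python
from typing import Dict

# Hypothetical rubric: criterion names and weights are assumptions.
RUBRIC_WEIGHTS: Dict[str, float] = {
    "correctness": 0.5,
    "code_quality": 0.3,
    "documentation": 0.2,
}


def weighted_score(
    criterion_scores: Dict[str, float],
    weights: Dict[str, float] = RUBRIC_WEIGHTS,
) -> float:
    """Combine per-criterion scores into one weighted score."""
    if set(criterion_scores) != set(weights):
        raise ValueError("scores must cover exactly the rubric criteria")
    total_weight = sum(weights.values())
    # Dividing by the total keeps the result on the input scale
    # even if the weights do not sum to exactly 1.
    return sum(criterion_scores[k] * weights[k] for k in weights) / total_weight


overall = weighted_score(
    {"correctness": 8, "code_quality": 6, "documentation": 10}
)
```

Rejecting a score set that does not match the rubric guards against the grader silently skipping or inventing a criterion.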

Implementation Example II

Interviewer & Task Provisioning

This snippet illustrates how the system manages the transition from a live voice session to a practical coding task by automating the creation of a timed environment.

INTERVIEWER
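The interviewer side might look roughly like the following sketch. It deliberately leaves out the Chainlit and OpenAI Realtime wiring and models only the session bookkeeping: collecting turns, cleaning the transcript, and handing off when the interview ends. `InterviewSession`, `clean_transcript`, and the `on_complete` callback are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, text)


def clean_transcript(turns: List[Turn]) -> List[Turn]:
    """Normalize whitespace, drop empty turns, merge same-speaker runs."""
    cleaned: List[Turn] = []
    for speaker, text in turns:
        text = " ".join(text.split())
        if not text:
            continue
        if cleaned and cleaned[-1][0] == speaker:
            cleaned[-1] = (speaker, cleaned[-1][1] + " " + text)
        else:
            cleaned.append((speaker, text))
    return cleaned


@dataclass
class InterviewSession:
    candidate_id: str
    role: str
    turns: List[Turn] = field(default_factory=list)
    finished: bool = False

    def add_turn(self, speaker: str, text: str) -> None:
        if self.finished:
            raise RuntimeError("interview already finished")
        self.turns.append((speaker, text))

    def finish(
        self, on_complete: Callable[[str, str, List[Turn]], None]
    ) -> List[Turn]:
        """Close the session and pass the cleaned transcript downstream."""
        self.finished = True
        transcript = clean_transcript(self.turns)
        on_complete(self.candidate_id, self.role, transcript)
        return transcript
```

Passing the handoff in as a callback keeps the voice session unaware of what follows it, so task provisioning or feedback generation can be attached without touching the interview loop.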

TASK GENERATOR
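Task provisioning can be sketched as below, under stated assumptions. The notebook creation step is passed in as a callable because the real task service and notebook calls are not shown in the article. The two-hour limit, the five-minute grace period, and every name here (`TaskAssignment`, `provision_task`, `has_access`, `stub_create_notebook`) are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional


@dataclass
class TaskAssignment:
    candidate_id: str
    role: str
    notebook_url: str
    access_expires_at: datetime
    evaluate_at: datetime


def provision_task(
    candidate_id: str,
    role: str,
    create_notebook: Callable[[str, str], str],
    now: Optional[datetime] = None,
    time_limit: timedelta = timedelta(hours=2),  # assumed limit
    grace: timedelta = timedelta(minutes=5),     # assumed grace period
) -> TaskAssignment:
    """Create the notebook, then fix the access window and evaluation time."""
    now = now or datetime.now(timezone.utc)
    notebook_url = create_notebook(candidate_id, role)
    expires = now + time_limit
    return TaskAssignment(
        candidate_id=candidate_id,
        role=role,
        notebook_url=notebook_url,
        access_expires_at=expires,
        evaluate_at=expires + grace,  # auto-evaluation runs after access ends
    )


def has_access(assignment: TaskAssignment, at: datetime) -> bool:
    """True while the candidate may still edit the notebook."""
    return at < assignment.access_expires_at


# Stand-in for the real notebook creation call.
def stub_create_notebook(candidate_id: str, role: str) -> str:
    return f"https://example.invalid/notebooks/{candidate_id}-{role}"
```

Scheduling evaluation slightly after access expires avoids grading a notebook the candidate is still allowed to edit.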

Technical Stack Summary

| Super-Agent | Internal Sub-Agents | Key Technologies | Core Responsibility |
| --- | --- | --- | --- |
| AI Talent Matcher | Ingestion Engine, Normalization Agent, Recruiting Analyst, Background Checker, GitHub Analyzer | Pydantic, CrewAI, GitHub/Search APIs, Vector Embeddings | Transforming raw PDFs into structured, enriched, and ranked candidate intelligence. |
| AI Technical Interviewer | Voice Interaction Agent, Feedback Agent, Tech Task Generator, Evaluator Agent | WebSockets, OpenAI Realtime, Chainlit, Colab API, Rubric-based LLM Grading | Managing the end-to-end live assessment lifecycle: voice interview, custom tasking, and code review. |

Conclusion

Engineering Efficiency and the 80% Performance Leap

This architecture supports the view that the bottleneck in enterprise AI is contextual integrity, not raw model power. By implementing hierarchical decomposition, we isolate logic within specialized sub-agents, each working with a narrow context, which counters the reasoning decay that monolithic LLMs show as context grows.

Technically, this turns recruitment into a structured engineering pipeline, from high-dimensional vector similarity in the Talent Matcher to low-latency WebSockets in the Technical Interviewer. From a business perspective, automating time-consuming tasks like semantic parsing and code review reduces manual recruiter workload by up to 80%. Nesting specialized intelligence within autonomous “Super-Agents” is, in our view, the most viable path to reliable, data-backed hiring decisions and strong enterprise ROI.